When considering data collection in sport, it can be quite easy to convince ourselves that we don’t need to be doing it, or that we just don’t have the means to do it.

While I am a strong proponent of using objective data to our advantage, I can still relate: the whole data thing can seem like a cumbersome process from the outside. The words “data collection” alone can be intimidating in their own right, and understandably so, let alone actually attempting to answer the what, when, and how of the matter.

Then there are the perceived barriers to entry: what if we don’t have the best or most convenient technology? Or any technology at all? Not to mention a staff member with a sports science degree or background.

For all of these reasons, I can personally understand why getting started with data collection in your sport can seem overwhelming. But it doesn’t have to be.

Yes, getting great at data collection, maintenance, analysis, and monitoring can certainly be challenging. However, collecting meaningful information to answer your sport-related questions isn’t about being great; it is about being consistent. And to be consistent, we have to understand the value that collecting data can bring to our knowledge of the game and to the coaching of our athletes.

Thus, the purpose of this four-part series is not only to provide the why behind data collection in your sport (and at your current level of athletics), but also to de-threaten it so that you, your staff, and your players can feel comfortable as you dip your toes into the water.

Part I – Where to Start? Quantify the Game

Part II – coming soon

Part III – coming soon

Part IV – coming soon

Where to Start? Quantify the Game

“Measure what matters” is an often-used adage when discussing the importance of monitoring performance data or training progress. We also hear people reiterate the importance of being evidence-based or evidence-led in our practices. Both of these nuggets are undoubtedly critical when developing athletes.

However, what if we don’t know what is important? Where do we even begin to repeatedly measure and monitor? This is where simply beginning to collect data at all can provide a great deal of value in the early goings of your “data-driven” shift:

Rather than guessing what matters in your sport, or attempting to monitor as much as possible, or copying what others are doing without knowing their own contexts… why not make a diligent effort to take stock of what actually happens in your sport with your players?

This method might be a bit of a slow build: it takes time and consistent effort to build a database of information that can help us answer questions. But, in this case, I can assure you that slowing down now can only help us speed up down the line.

Whether it be in practice and/or competition, by collecting consistent data over a period of time we can gain a better understanding of the actions and outcomes that occur most often in our sport (and thus, are probably most important to understand on a deeper level). You might even be able to find associations between certain outcomes and other events.

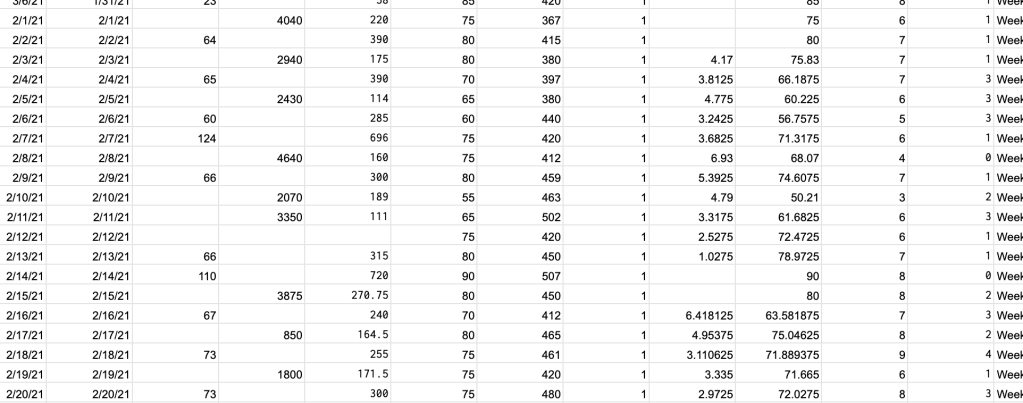

A hypothetical example: if you are collecting a simple metric such as how many sprints players have in practice and games during the competitive season and the off-season (call it Sprint Count), as well as a player wellness metric such as their self-reported Energy each day, you could potentially learn many things:

- You might observe that every time a player gets “a lot” of sprints in a day or week, their energy drops the next day

- You could also see that the average game involves 5 sprints (maybe this is more than you ever assumed), with one position in particular getting substantially more sprint efforts than the rest

- You could even find that your typical in-game sprint load is 5 sprints and that your daily off-season Sprint Count also peaks at 5. That satisfies the average, but what about the worst-case scenario (e.g., a player who gets 9 sprints in a game)?

By consistently collecting even just two simple metrics, we can learn a great deal about our players and the game itself. In the hypothetical scenario above, we have the potential to further our knowledge of…

- Our Players’ Response – energy dips with higher workload

- Our Game’s Demands – number of sprints per game, for the group and each position

- The Appropriateness of Our Preparation – how much workload is being prescribed to prepare for the demands of the game
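For anyone logging these two metrics in a spreadsheet, the three takeaways above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a prescribed method: the log layout, player IDs, positions, the 1-10 Energy scale, the cut-off of 6 sprints for “a lot”, and every number below are invented for the example.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical log: one row per player per day. Every value is invented.
LOG = [
    {"player": "P1", "position": "OF", "day": 1, "session": "game", "sprints": 7},
    {"player": "P2", "position": "IF", "day": 1, "session": "game", "sprints": 3},
    {"player": "P1", "position": "OF", "day": 2, "session": "practice", "sprints": 2},
    {"player": "P2", "position": "IF", "day": 2, "session": "practice", "sprints": 2},
    {"player": "P1", "position": "OF", "day": 3, "session": "game", "sprints": 9},
    {"player": "P2", "position": "IF", "day": 3, "session": "game", "sprints": 1},
    {"player": "P1", "position": "OF", "day": 4, "session": "practice", "sprints": 1},
    {"player": "P2", "position": "IF", "day": 4, "session": "practice", "sprints": 4},
]

# Hypothetical self-reported Energy on an assumed 1-10 scale, keyed by (player, day).
ENERGY = {
    ("P1", 2): 5, ("P2", 2): 8,
    ("P1", 4): 4, ("P2", 4): 8,
    ("P1", 5): 7, ("P2", 5): 6,
}

# Hypothetical off-season daily Sprint Counts per player.
OFFSEASON_DAILY = {"P1": [3, 5, 4], "P2": [5, 2, 5]}


def game_demands(log):
    """Our Game's Demands: average and worst-case sprints per player-game."""
    games = [r for r in log if r["session"] == "game"]
    by_position = defaultdict(list)
    for r in games:
        by_position[r["position"]].append(r["sprints"])
    return {
        "avg": mean(r["sprints"] for r in games),
        "worst": max(r["sprints"] for r in games),
        "avg_by_position": {pos: mean(v) for pos, v in by_position.items()},
    }


def next_day_energy(log, energy, high=6):
    """Our Players' Response: mean next-day Energy after high vs. other days.

    `high` is an assumed cut-off for "a lot" of sprints in one day.
    """
    after_high, after_other = [], []
    for r in log:
        nxt = energy.get((r["player"], r["day"] + 1))
        if nxt is None:
            continue  # no Energy report for the following day
        if r["sprints"] >= high:
            after_high.append(nxt)
        else:
            after_other.append(nxt)
    return mean(after_high), mean(after_other)


def preparation_gap(offseason_daily, worst_game):
    """Our Preparation: off-season daily peak vs. the worst game observed."""
    peak = max(max(days) for days in offseason_daily.values())
    return peak, worst_game - peak


demands = game_demands(LOG)
print(demands)  # average 5 per game, worst case 9; OF averages 8 vs. IF 2
print(next_day_energy(LOG, ENERGY))  # (4.5, 7.25)
print(preparation_gap(OFFSEASON_DAILY, demands["worst"]))  # (5, 4)
```

With two players and four days, none of this is evidence of anything; the same functions simply keep working as the log grows over a season, which is where consistency pays off.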

While we will touch on dot point #1 in subsequent posts, I highlight all three initially to illustrate just how much value can be found in simply taking the time and doing the forward-planning to log events or observations from your sport.

So much can be learned by quantifying the sport.

Being “Evidence-Led”

There is so much that we might suspect to be true of our game, but until we attempt to put some semblance of objectivity behind it all, the beliefs we hold are really just gut feelings born of experience and singular perspectives. Which, in reality, are still valuable. But imagine the power of combining experiential learning with what comes from quantifying the game on a granular level. A sailor with a lifetime of experience is invaluable; but a lifelong sailor who is also armed with a GPS? Well, she is darn near infallible, don’t you think?

And this is what being evidence-led is really all about: using all of the evidence at our current disposal while also reaching for more, so that we can learn, calibrate, and grow our perspectives and keep fine-tuning our decision-making to handle anything the game throws at us. In simpler terms, it means using both our intangible experiences and what can be measured to be the best coach we can be for the team.

One way to be evidence-led is to consult the literature (the scientific research) involving your sport. This can be an alternative to doing your own data collection; however, it has its limitations. For starters, the most useful literature for us is conducted on a sample of people (hopefully athletes, though that is not always the case) who are representative of the population with which we work. In my particular case, I would be looking for studies involving 17-to-35-year-old elite baseball players (preferably professional). However, as you might imagine, that literature is harder to come by than, say, studies involving recreational athletes, college students, sedentary adults, and the like.

Either way, the sample in any study can never truly be the same as your pool of athletes. There is still an indescribable amount of value in consulting the literature, but we must always work under the assumption that the findings in any given study may not be wholly applicable to our athletes.

Additionally, the literature in your given sport (or with your level of athletes) may still be in the early stages of its evolution, with few (if any) highly-rated studies to go by. If, for example, you have ever dug into the literature on the demands of baseball (especially on workload-related topics), you will have noticed that it is scant relative to that of the likes of soccer.

Thus, collecting data on your own can not only supplement your review of the literature, but also make up for where the literature may be lacking (in sheer quantity, or in applicability to your population). Finally, and certainly not least: if you don’t already have access to a research database, consulting the literature can be a challenging and quite expensive endeavor.

Meanwhile, when paired with a plan, a simple pen and paper can be a relatively cheap way to work toward understanding your sport better than most of your competitors.

What About All of The Technology?

Let me reiterate a very important point from the last passage: all it really takes to collect and harness the power of data is a pen and paper (or a spreadsheet, of course!) and a plan.

Technology is fantastic for making the data collection process more convenient – as millions of data points across hundreds of metrics can be collected in just one session. Yes, what you are capable of learning when you have technology – such as motion capture, heart rate monitoring, or GPS tracking – is nearly unlimited. However, what you will actually learn is wholly dependent on the resources and faculties that you and your staff have to maintain, aggregate, and analyze that heap of data. And, while it may be precise, precision means very little if you don’t have a target in mind; it is all about the questions you ask of the data – especially when you have a lot of it. But finding the right questions after the fact can be an overwhelming process compared to having a question going in and capturing only the information that you believe can help you solve it, all within the means of your current resources.

The more primitive means of data collection, on the other hand (a pen and paper, a stopwatch, a Google Doc Survey, your own eyes), may not be the most precise. However, I have always been of the belief that we would rather be a few literal yards off in our estimation than a metaphorical mile off. In other words, we would prefer to have a good estimation rather than no estimation at all.

Was that run hard enough to be a sprint? I am not sure… let’s count it! I get it, this isn’t precise. But, I would rather be wrong by a rep or two than to not have the slightest idea of what the game demands or what my players are experiencing at all.

And what these simple forms of data collection lack in precision, they make up for in repeatability and consistency. It does not take a dedicated sport scientist to run a clicker that counts sprints, nor does it take much of a data analyst to run some averages over time.
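As a rough illustration of how little analysis that takes, here is a sketch that turns one player’s daily clicker tallies (copied off paper into a list) into a trailing average. The function name `rolling_average` and the default 7-day window are assumptions for the example, not a standard.

```python
from collections import deque


def rolling_average(daily_counts, window=7):
    """Trailing mean of the last `window` daily tallies.

    Early days use however many tallies exist so far.
    """
    recent = deque(maxlen=window)  # drops the oldest tally automatically
    averages = []
    for count in daily_counts:
        recent.append(count)
        averages.append(sum(recent) / len(recent))
    return averages


# Hypothetical daily sprint tallies for one player.
tallies = [3, 5, 4, 6, 2, 7]
print(rolling_average(tallies, window=3))  # [3.0, 4.0, 4.0, 5.0, 4.0, 5.0]
```

A spreadsheet AVERAGE over the previous seven rows does the same job; the code only shows that there is nothing more to it.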

To begin collecting data, all it takes is the forward-planning to prepare for the task, the diligence to see it through, and the patience to wait for enough data to come in over time for it to be usable or insightful.

Respectfully,

Ryan
